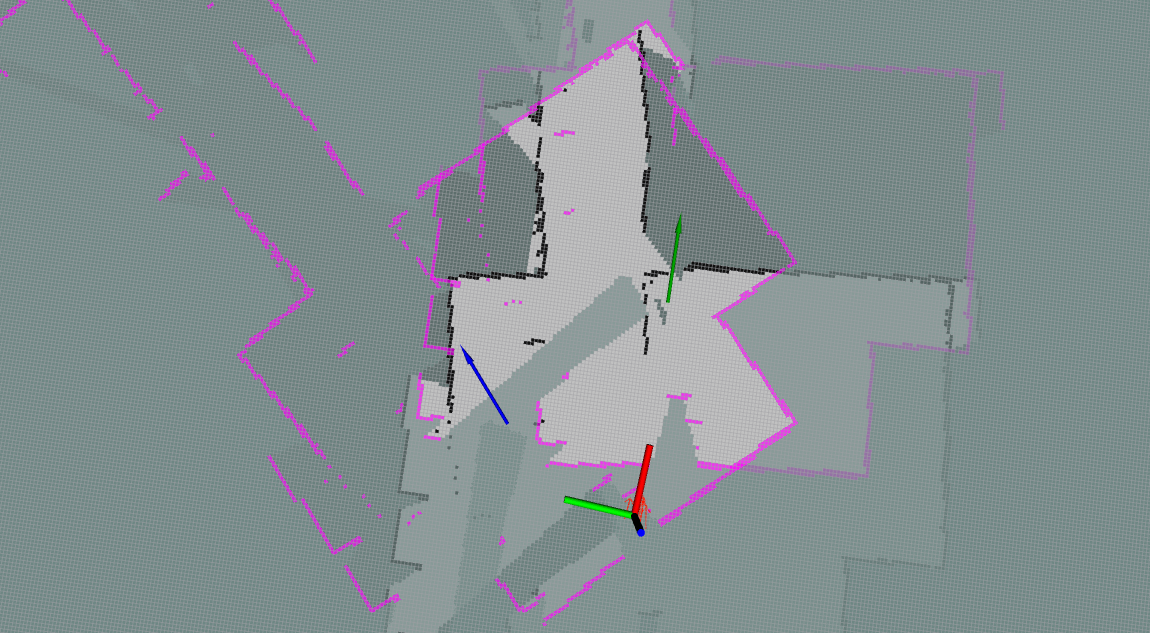

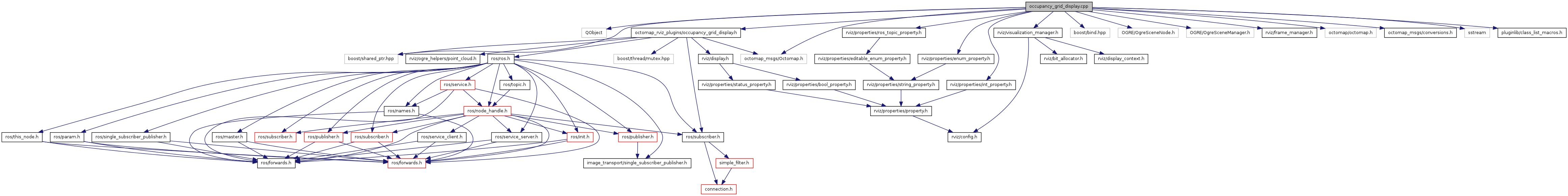

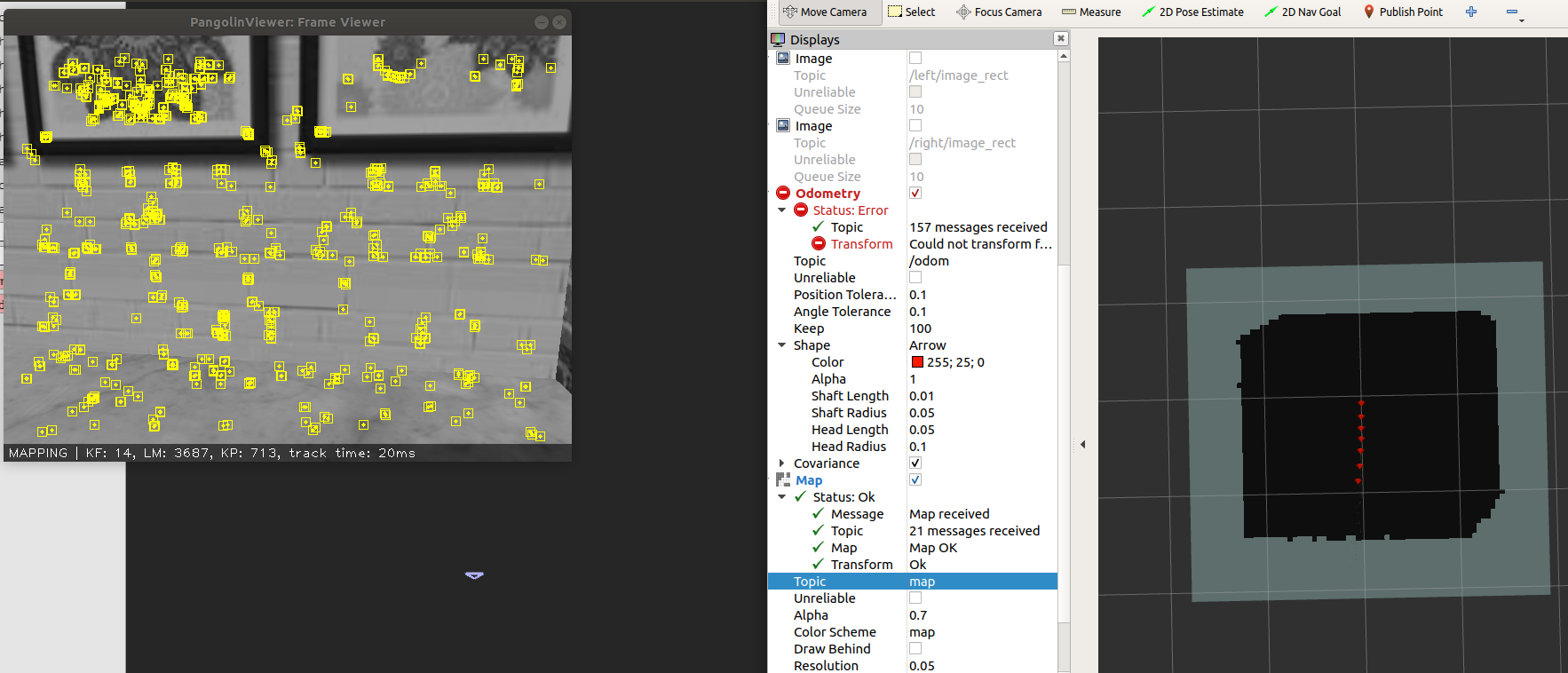

The ROS Navigation Stack combines the requirements of mapping, localization, and obstacle avoidance into a complete sense-plan-act system. It is a 2D navigation stack that takes in information from odometry, sensor streams, and a goal pose and outputs safe velocity commands that are sent to a mobile base. The Navigation Stack relies on the map_server package for the 2D map, the amcl package for localization in that map, sensor and odometry messages from the quadrotor, and the move_base package to fuse all the messages in order to output a desired velocity command. This integration is shown in the accompanying figure.

The Navigation Stack needs to be configured for the shape and dynamics of a robot to perform at a high level, and there are several prerequisites for its use:

- The Navigation Stack can only handle differential-drive and holonomic wheeled robots. It assumes that the mobile base is controlled by sending desired velocity commands in the form of x velocity, y velocity, and theta velocity.
- The shape of the robot must be either a square or a rectangle. The Navigation Stack was developed on a square robot, so its performance will be best on robots that are nearly square or circular.
- The robot must have a tf transform tree in place so that it publishes information about the relationships between the positions of all the joints and sensors.
- It requires a planar laser mounted somewhere on the mobile base. This laser is used for map building and localization.
- The robot must publish sensor data using the correct ROS message types.

For simulating this application, the original 3DR Kit C Gazebo model was modified to include a Kinect sensor mounted on the front arm of the quadrotor. Additionally, the WillowGarage world was loaded to provide an indoor environment suitable for mapping. The WillowGarage world and the RGB output of the Kinect sensor can be seen in the accompanying Gazebo screenshot.
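The velocity command format named above (x velocity, y velocity, theta velocity) can be illustrated with a small sketch. It is not code from the Navigation Stack: the `VelocityCommand` and `Pose2D` classes and the `apply_command` function are hypothetical names, and the sketch assumes the command is expressed in the robot's body frame and integrated with a simple Euler step.

```python
import math
from dataclasses import dataclass


@dataclass
class VelocityCommand:
    """Hypothetical body-frame command: forward, lateral, and yaw rates."""
    vx: float      # m/s along the robot's x axis
    vy: float      # m/s along the robot's y axis (zero for differential drive)
    vtheta: float  # rad/s about the vertical axis


@dataclass
class Pose2D:
    x: float
    y: float
    theta: float


def apply_command(pose, cmd, dt):
    """Advance a 2D pose by one Euler step of a body-frame velocity command."""
    c, s = math.cos(pose.theta), math.sin(pose.theta)
    return Pose2D(
        # Rotate the body-frame velocity into the map frame before integrating.
        x=pose.x + (c * cmd.vx - s * cmd.vy) * dt,
        y=pose.y + (s * cmd.vx + c * cmd.vy) * dt,
        theta=pose.theta + cmd.vtheta * dt,
    )
```

A differential-drive base can only realize commands with `vy` equal to zero, while a holonomic base can use all three components, which is one way to read the drive-type prerequisite.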
Therefore, obstacle avoidance and detection systems are developed as methods of continually updating the map. However, in the real world, the environment is dynamic and sensor measurements are noisy.

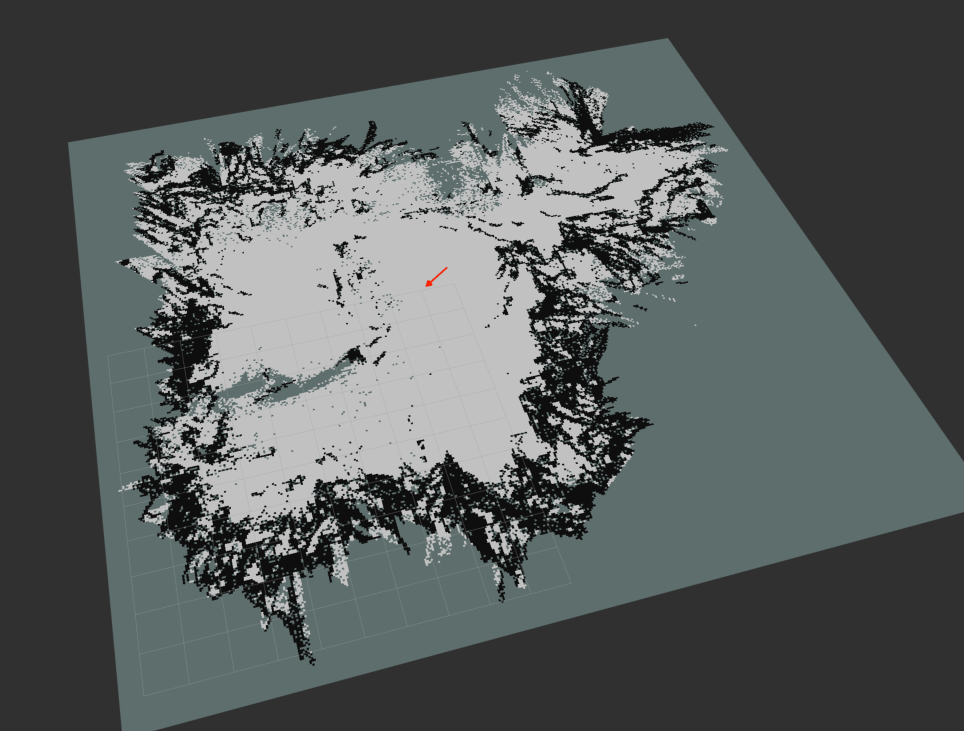

In an ideal world, the map accurately reflects the environment, the environment is stable, and the localized estimate of the quadrotor’s pose in that map is accurate. It is also possible to use these point clouds to emulate a 2D laser scan output. The Kinect outputs point clouds which can be used by the SLAM algorithms to build the map of the environment. This sensor, a Microsoft Kinect, contains stereo cameras as well as a depth sensor. Instead, a RGBD sensor is used as the primary means of measuring the environment.
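Emulating a 2D laser scan from the Kinect point cloud, as mentioned above, amounts to keeping a thin horizontal slice of the cloud and recording the nearest point along each bearing. The following is a minimal sketch of that idea, not the implementation used in this project. The function name `cloud_to_scan`, its parameters, and the axis convention (x forward, y left, z up) are assumptions made for illustration.

```python
import math


def cloud_to_scan(points, angle_min, angle_max, num_beams,
                  z_min=-0.1, z_max=0.1, range_max=10.0):
    """Collapse 3D points (x forward, y left, z up) into a planar scan.

    Returns one range per beam; beams with no return hold math.inf.
    """
    ranges = [math.inf] * num_beams
    beam_width = (angle_max - angle_min) / num_beams
    for x, y, z in points:
        if not (z_min <= z <= z_max):
            continue  # outside the horizontal slice being emulated
        r = math.hypot(x, y)
        if r == 0.0 or r > range_max:
            continue  # degenerate point or beyond the assumed sensor range
        angle = math.atan2(y, x)
        if not (angle_min <= angle < angle_max):
            continue  # outside the emulated field of view
        i = min(int((angle - angle_min) / beam_width), num_beams - 1)
        ranges[i] = min(ranges[i], r)  # keep the nearest obstacle per beam
    return ranges
```

The height band (`z_min`, `z_max`) decides which obstacles the emulated scan can see, so it would need to match the altitude at which the quadrotor flies.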

A map is a representation of the environment where the quadrotor is operating. To operate in the map, the quadrotor needs to know its position in the map coordinate frame. Ultimately, the goal is to develop a system that allows the quadrotor to autonomously reach a desired goal state in the map. The path from the initial state to the goal state can be a series of waypoints or actions, if a path exists.

A perfect estimate of the quadrotor’s pose, which is needed for map creation, is typically not available, especially in indoor environments. Simultaneous Localization and Mapping (SLAM) is the process of both making and updating a map of the environment while also estimating the system’s location in that map. This map can be saved and used later for localization and navigation without the need to rebuild the map. Typically, lasers are used to create two-dimensional maps based on range measurements. However, these are often too large, too heavy, or too power-intensive for use on small quadrotors.
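The idea of a path as a series of waypoints that may or may not exist can be made concrete with a small grid search. The sketch below uses breadth-first search over an occupancy grid. It is an illustration only, not the planner used by the Navigation Stack, and the function name `plan_waypoints` and the grid representation are assumptions.

```python
from collections import deque


def plan_waypoints(grid, start, goal):
    """Breadth-first search over a 2D occupancy grid.

    grid[r][c] is True for an occupied cell. Returns the list of
    (row, col) waypoints from start to goal, or None if no path exists.
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and not grid[nr][nc] and nxt not in parent:
                parent[nxt] = cell
                queue.append(nxt)
    return None  # the goal is unreachable from the start
```

Returning `None` mirrors the "if a path exists" condition: when obstacles separate the start from the goal, there is no waypoint sequence to follow.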

2D Mapping and Navigation ■ 3D Mapping and Navigation

Once the quadrotor can reliably and stably navigate the environment based on a series of desired waypoints, the quadrotor system can be used to sense and comprehend its surrounding environment.
